Multi-GPU and distributed training using Horovod in Amazon SageMaker Pipe mode | AWS Machine Learning Blog

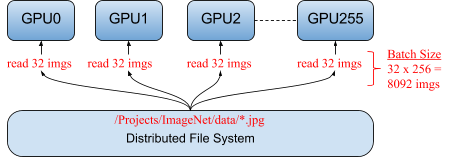

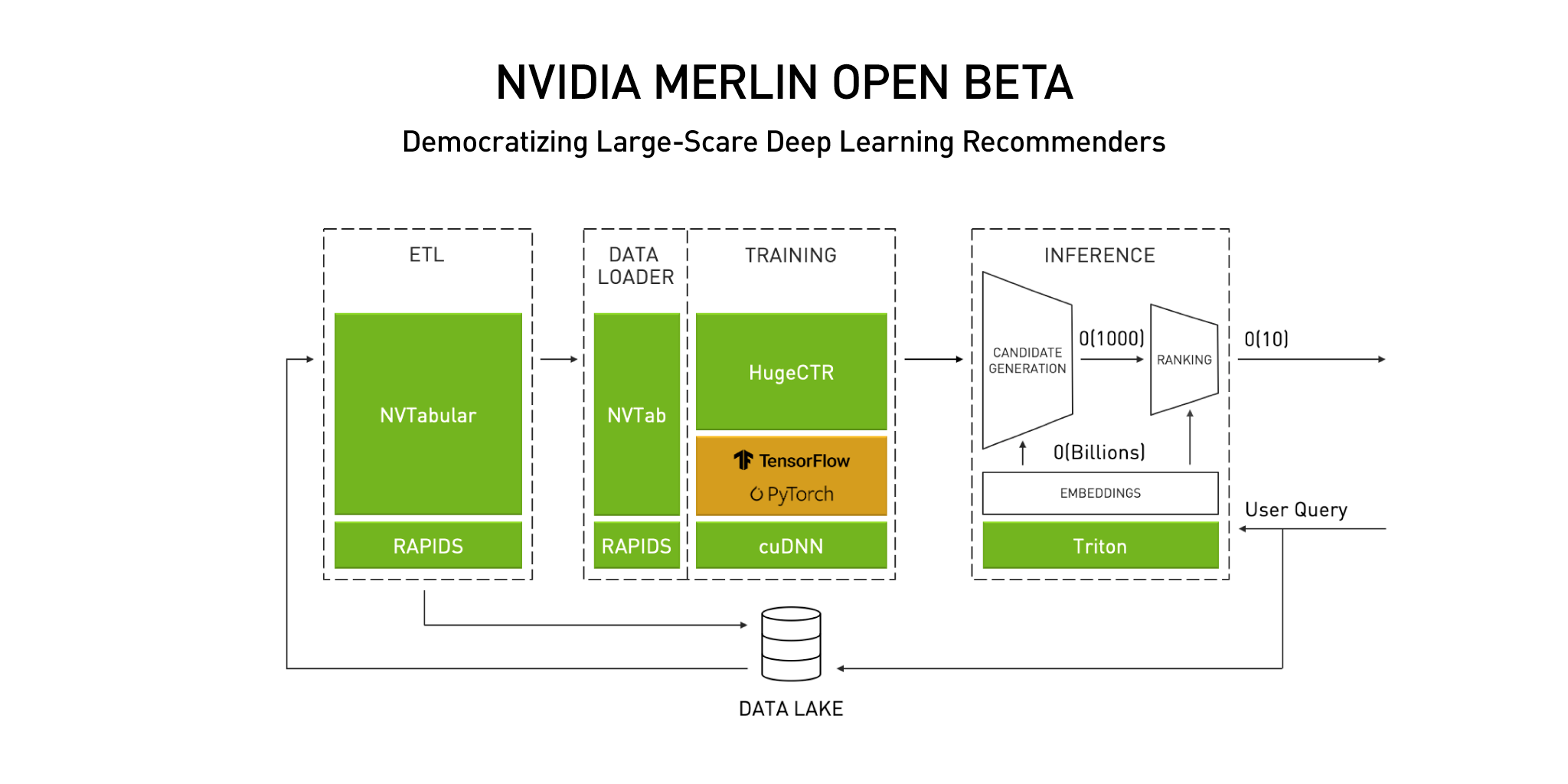

Announcing the NVIDIA NVTabular Open Beta with Multi-GPU Support and New Data Loaders | NVIDIA Technical Blog

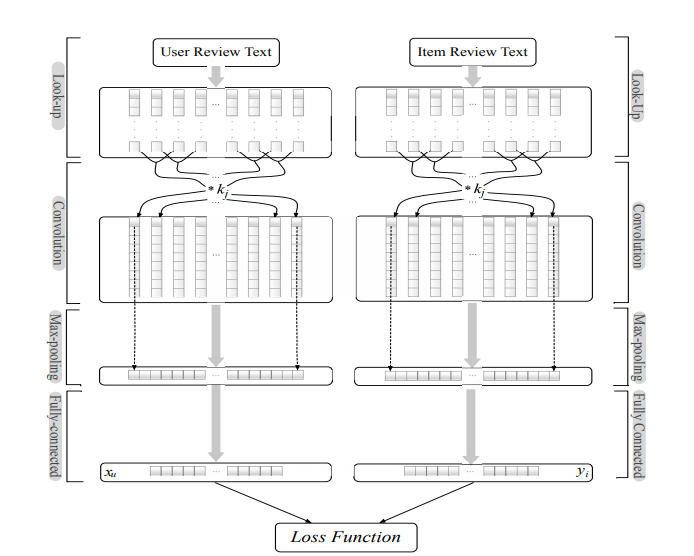

Figure 2 from 2.5D Deep Learning For CT Image Reconstruction Using A Multi-GPU Implementation | Semantic Scholar

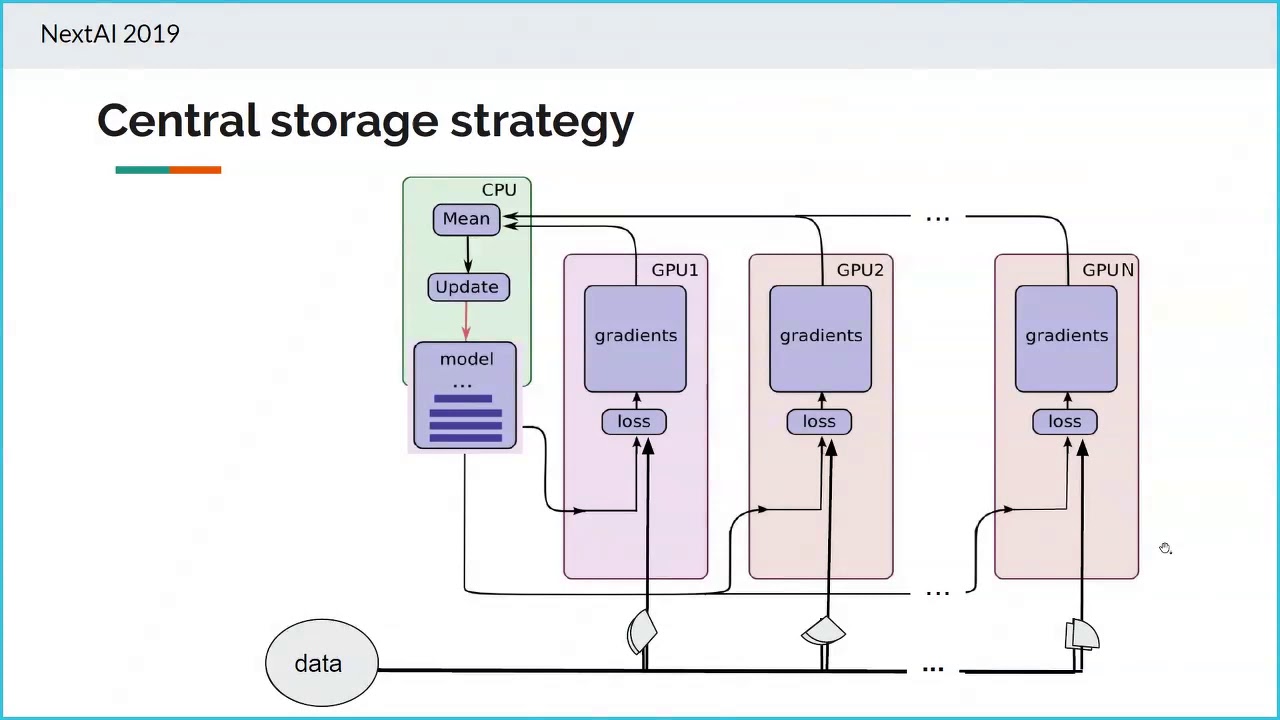

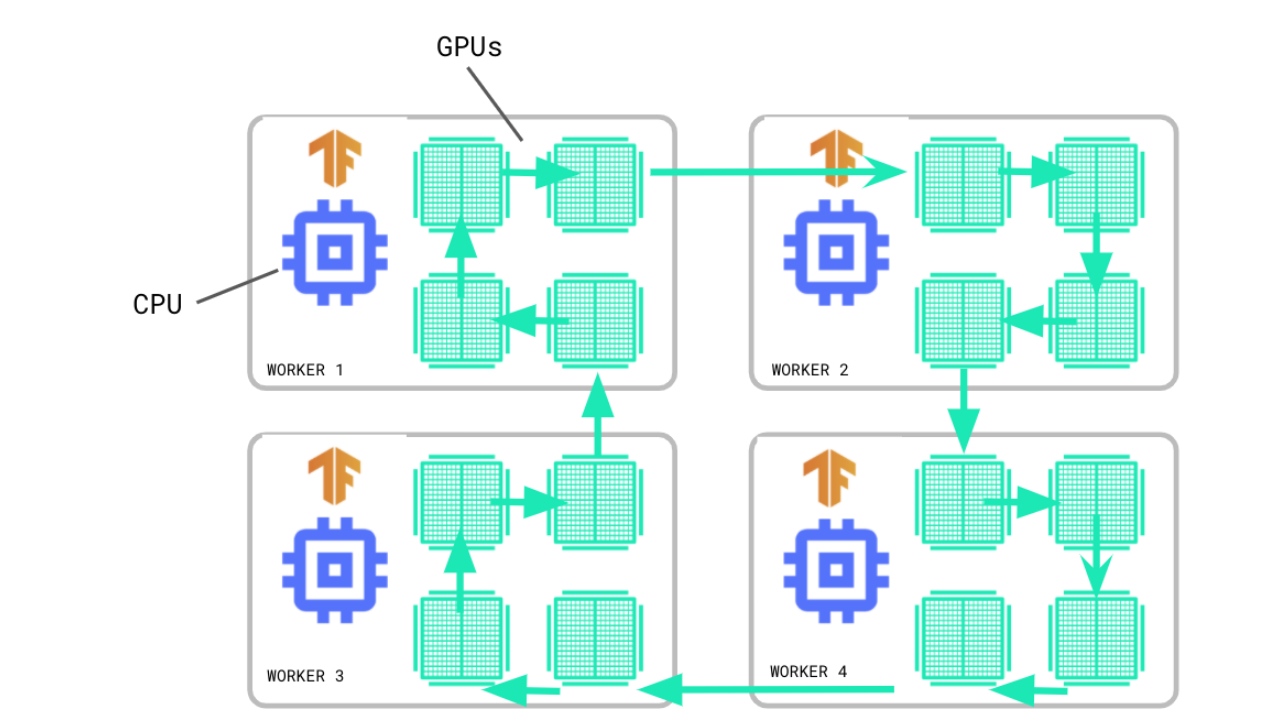

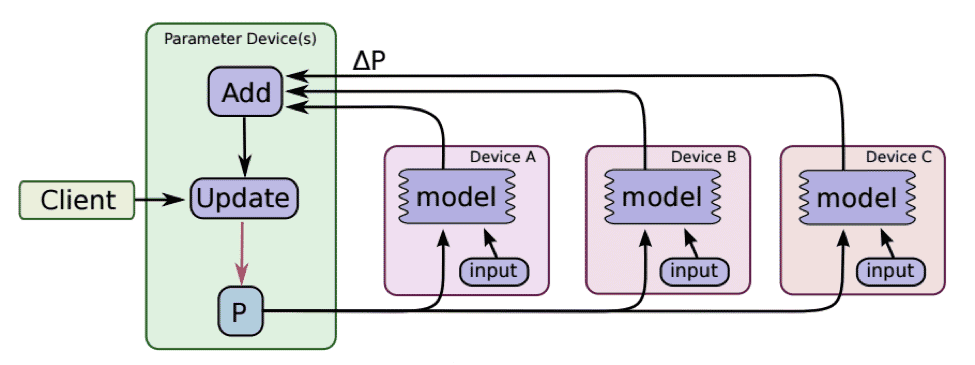

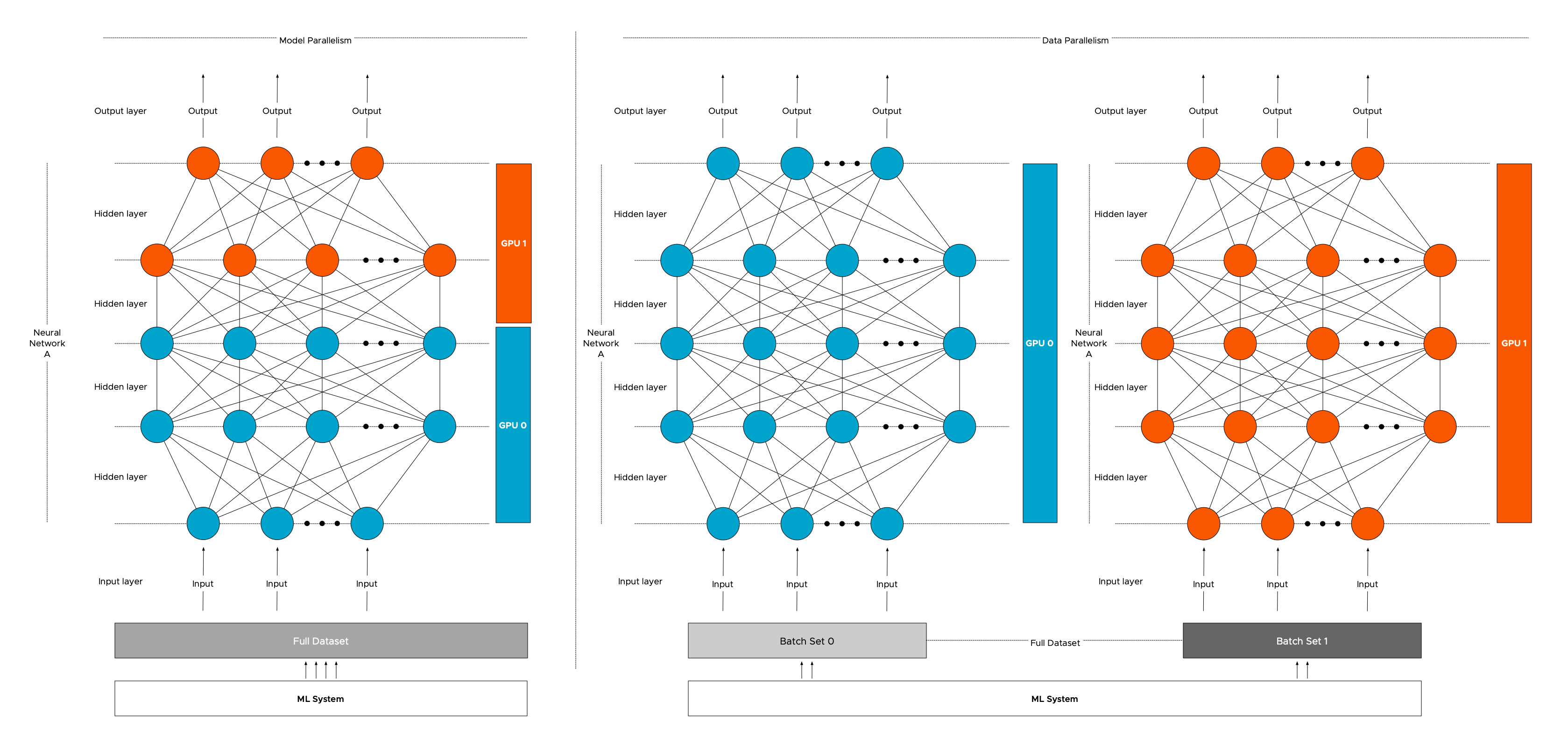

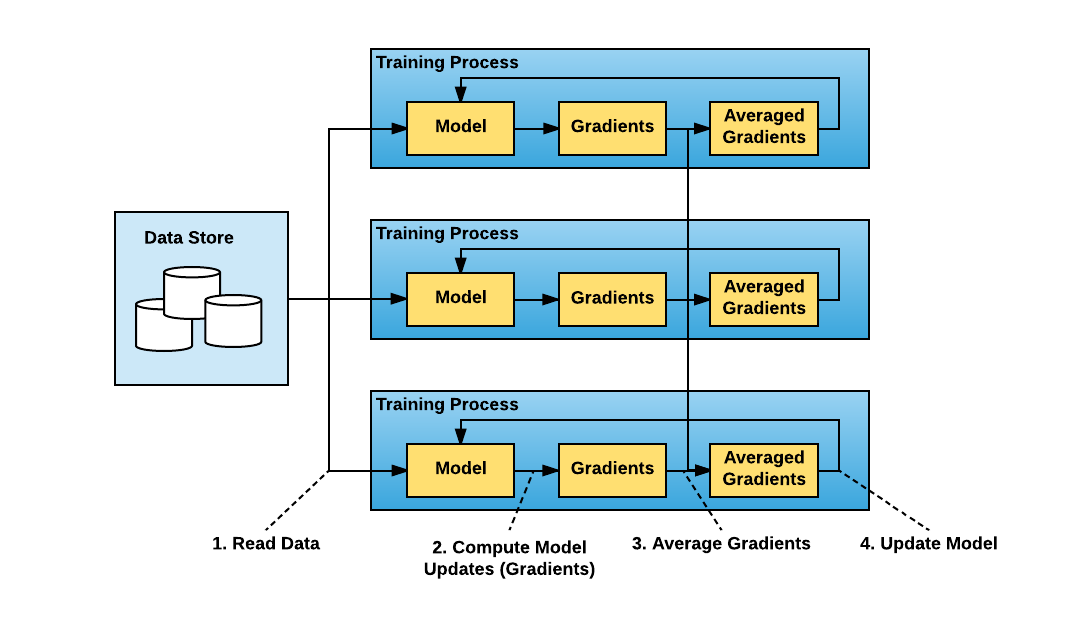

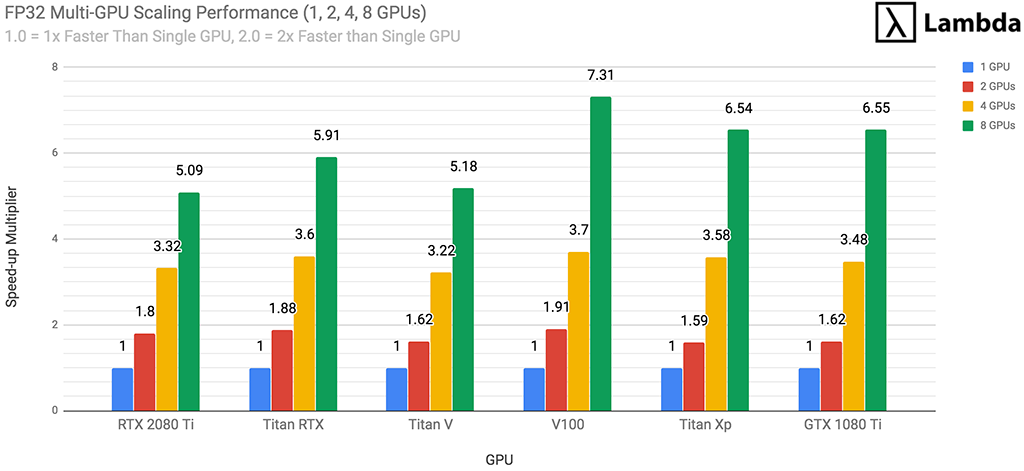

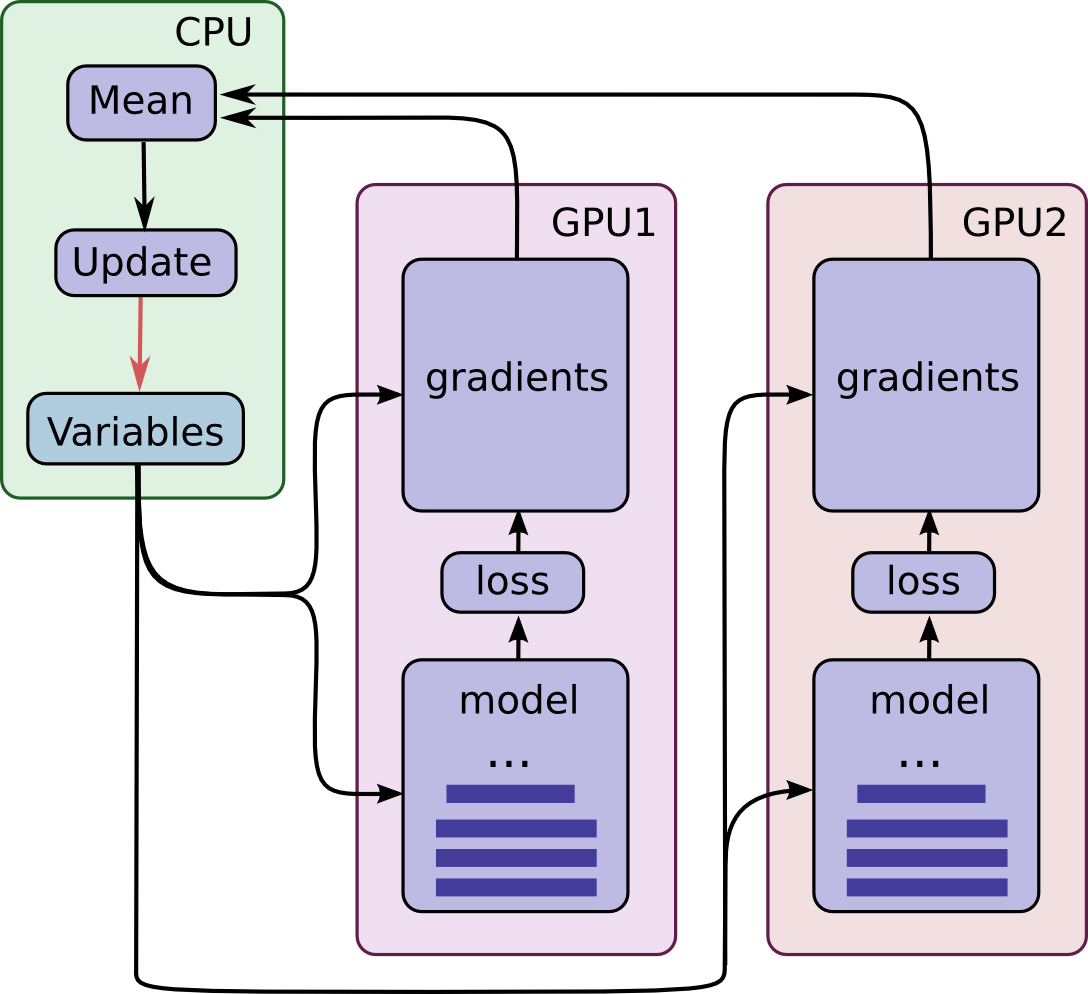

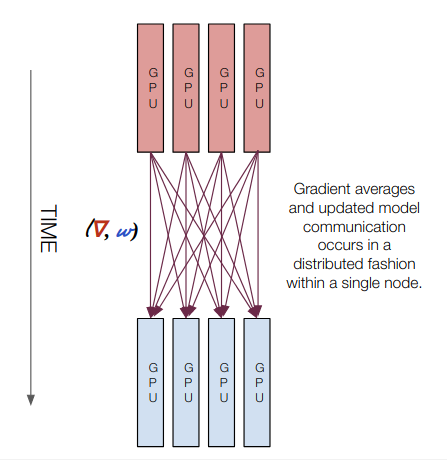

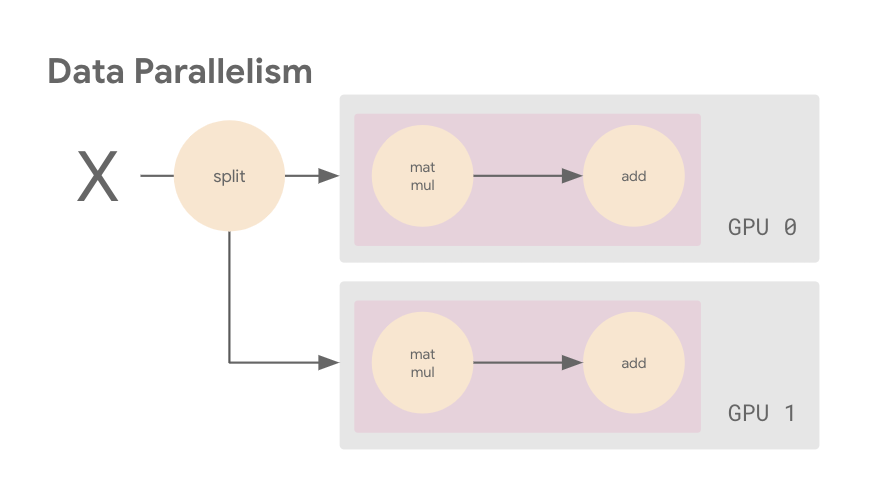

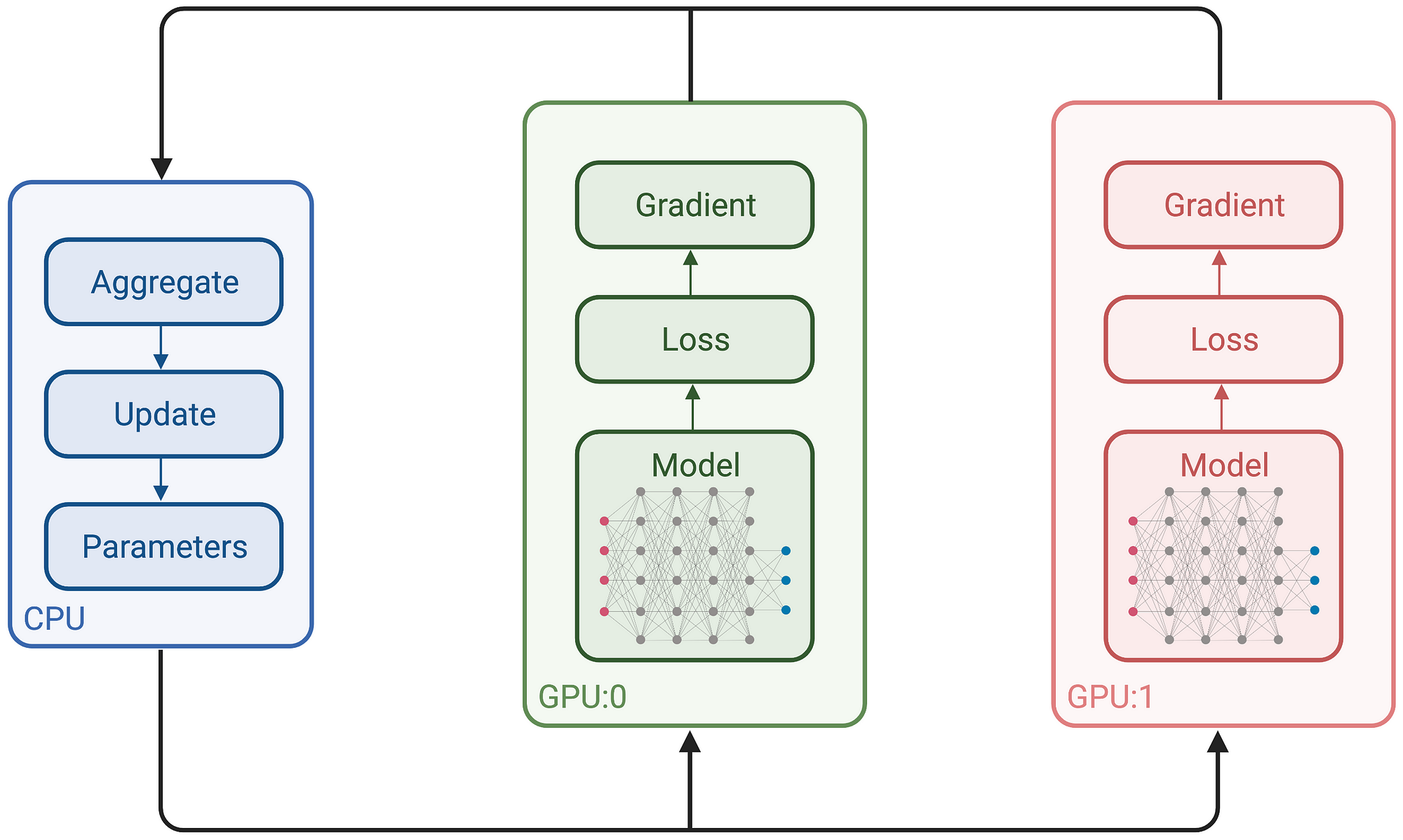

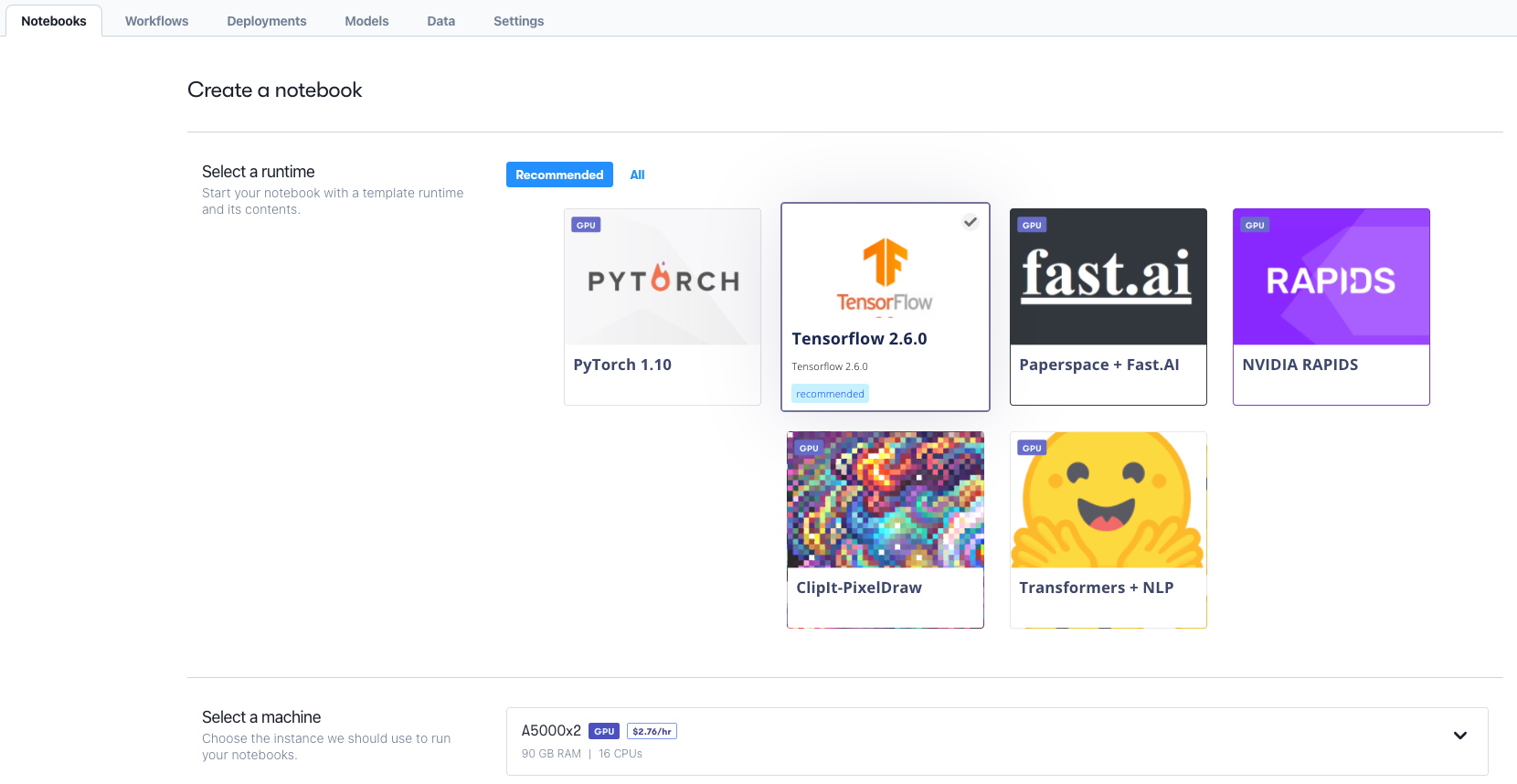

Deep Learning Frameworks for Parallel and Distributed Infrastructures | by Jordi TORRES.AI | Towards Data Science

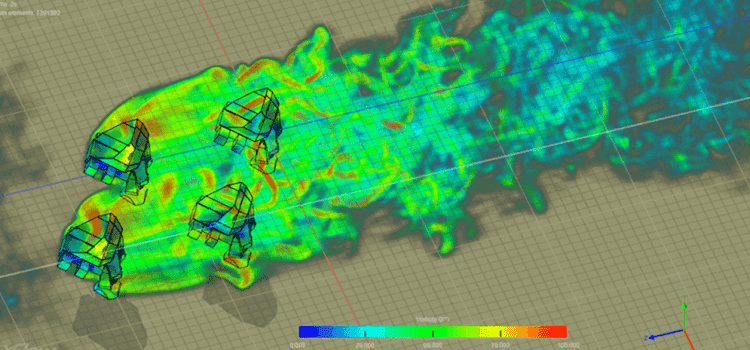

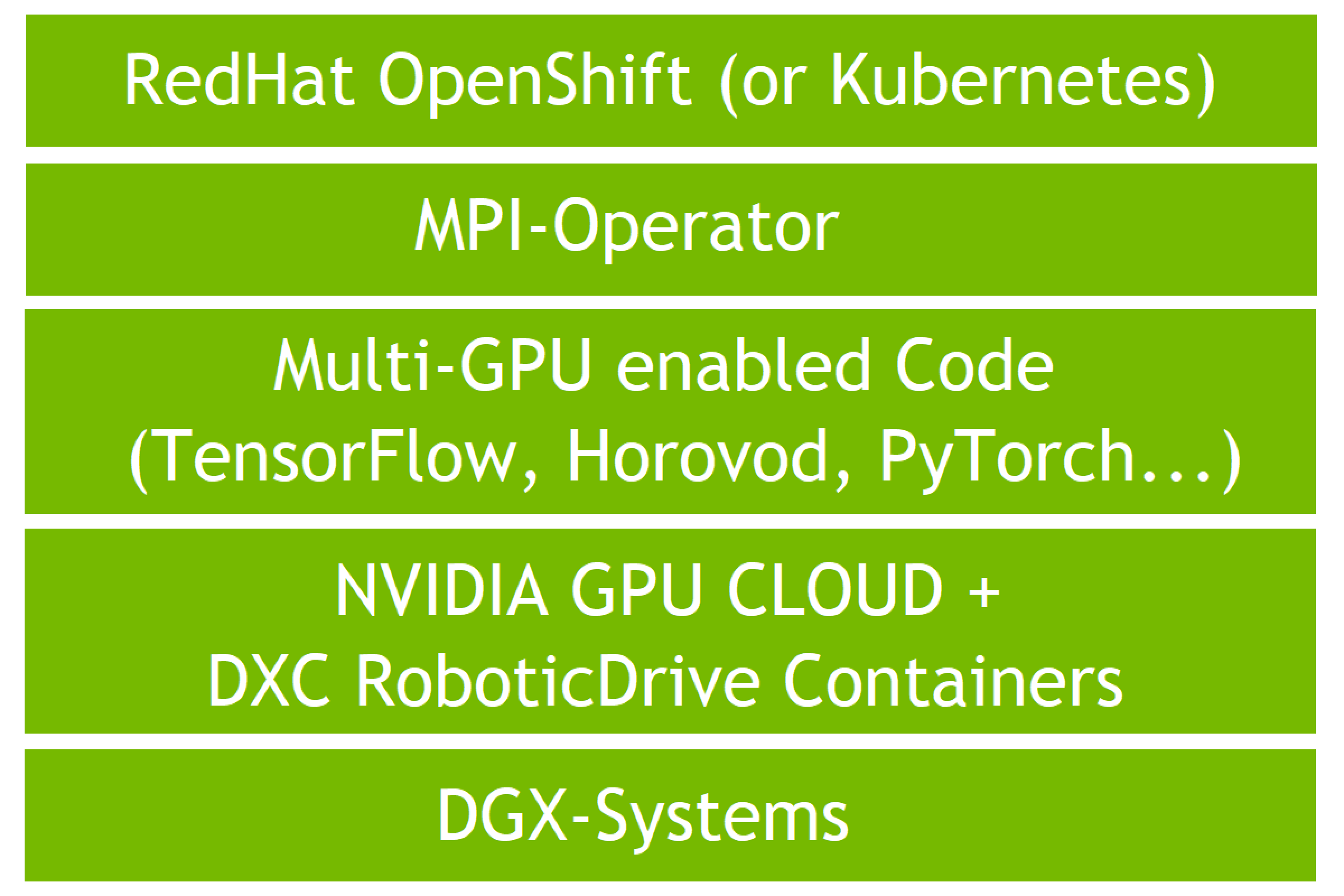

Validating Distributed Multi-Node Autonomous Vehicle AI Training with NVIDIA DGX Systems on OpenShift with DXC Robotic Drive | NVIDIA Technical Blog